Features

Every capability, from casual use to enterprise deployment.

Retrieval & RAG

Core: Manual (pass-through), Smart (parallel KB + memory + external recall with source_breakdown), Custom Smart (Pro: per-source weights, memory type filters). Orchestrator wraps the 22-step agent_query pipeline.

BM25s stemmed keyword index + Snowflake Arctic v1.5 ONNX embeddings (768-dim Matryoshka). Per-chunk retrieval profiles adjust vector/keyword weights adaptively (keyword 70/30 for structured docs, vector 70/30 for prose).
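A minimal sketch of how a per-chunk profile could blend the two signals. The 70/30 splits come from the text above; the function name, the profile labels, and the assumption that both scores are already normalized to [0, 1] are illustrative and not taken from the codebase.

```python
# Hypothetical profile table: (keyword weight, vector weight).
PROFILE_WEIGHTS = {
    "keyword": (0.7, 0.3),  # structured docs lean on BM25
    "vector": (0.3, 0.7),   # prose leans on embeddings
}


def hybrid_score(bm25: float, cosine: float, profile: str) -> float:
    """Blend a normalized BM25 score with a normalized vector similarity."""
    kw_weight, vec_weight = PROFILE_WEIGHTS[profile]
    return kw_weight * bm25 + vec_weight * cosine


# The same chunk ranks differently depending on its profile.
print(hybrid_score(0.9, 0.2, "keyword"))
print(hybrid_score(0.9, 0.2, "vector"))
```

Raw BM25 scores are unbounded, so a real pipeline would need a normalization step before blending; that step is omitted here.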

ms-marco-MiniLM-L-6-v2 ONNX cross-encoder. Profile-aware weights (20% CE / 80% original for keyword-strategy docs). Three modes: cross_encoder, llm_rerank, off.

19-stage adaptive pipeline (8 feature-flag conditional, 5 model-conditional). Always-on: validation, hybrid BM25+vector search, cross-domain stitching, context assembly with token budgeting, response streaming. Conditional: query decomposition (max 4 sub-queries), MMR diversity (lambda 0.7), Neo4j knowledge-graph expansion, late-interaction MaxSim reranking (ColBERT-style), cross-encoder reranking, streaming hallucination check, NLI contradiction gate. Cached at semantic (int8 quantized HNSW, 0.92 sim, 600s TTL), claim verdict, and context layers.
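To illustrate the MMR diversity stage, here is a small pure-Python sketch of Maximal Marginal Relevance with the lambda of 0.7 quoted above. The function names and the use of plain lists as embeddings are assumptions made for the example; the production stage presumably works on the real embedding vectors.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def mmr_select(query, candidates, k, lam=0.7):
    """Return indices of up to k candidates chosen by MMR."""
    selected = []
    remaining = list(range(len(candidates)))
    while remaining and len(selected) < k:

        def mmr(i):
            relevance = cosine(query, candidates[i])
            # Penalize similarity to anything already selected.
            redundancy = max(
                (cosine(candidates[i], candidates[j]) for j in selected),
                default=0.0,
            )
            return lam * relevance - (1 - lam) * redundancy

        best = max(remaining, key=mmr)
        selected.append(best)
        remaining.remove(best)
    return selected


# Candidates 0 and 1 are duplicates; MMR skips the duplicate on the second pick.
picked = mmr_select([1.0, 1.0], [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]], k=2)
```

With lambda at 0.7 relevance still dominates, so a near-duplicate is only displaced when a comparably relevant but different chunk is available.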

Pluggable external retrieval adapters (web search via Grok, custom REST endpoints). Source-annotated results flow through the same verification pipeline. Enable per-query or via Smart RAG mode's source_breakdown.

Verification

Core: 4 claim types (factual, recency, evasion, citation). Streaming SSE with per-claim confidence. 4-level verification cascade: KB → external data sources → cross-model (GPT-4o Mini) → web search (Grok). Monte Carlo evaluation harness with 83-claim corpus.
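The cascade can be pictured as a chain of verifiers tried in order. The sketch below assumes that a level either returns a verdict or reports "inconclusive" (None), and that the cascade escalates only on inconclusive results; that escalation rule and all names are assumptions for illustration.

```python
from typing import Callable, List, Optional, Tuple

# A verifier returns True/False for a verdict, or None when inconclusive.
Verifier = Callable[[str], Optional[bool]]


def run_cascade(
    claim: str, levels: List[Tuple[str, Verifier]]
) -> Tuple[str, Optional[bool]]:
    """Try each level in order and stop at the first conclusive verdict."""
    for name, verify in levels:
        verdict = verify(claim)
        if verdict is not None:
            return name, verdict
    return "unverified", None


# Stand-in verifiers; real ones would query the KB, external sources, and so on.
levels = [
    ("kb", lambda claim: None),
    ("external_sources", lambda claim: None),
    ("cross_model", lambda claim: True),
    ("web_search", lambda claim: False),
]
result = run_cascade("The release shipped in March.", levels)
```

Ordering the levels from cheapest to most expensive means the later, costlier checks only run for claims the earlier ones could not settle.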

ClaimOverlay popovers with source attribution. Footnote superscripts with pointer-events-auto. Expert mode (Grok 4) for re-verification. Per-message verification selection.

Self-RAG agent evaluates retrieval relevance and response groundedness at inference time. ISREL/ISSUP/ISUSE grading. Triggers additional retrieval passes (up to 3 iterations) when confidence falls below threshold. Reduces hallucination without requiring a larger model.
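A rough sketch of the retry loop described above. The retrieve, generate, and grade callables stand in for the real components, the 0.7 threshold is a placeholder, and treating the limit of 3 as the total number of passes is an assumption.

```python
def self_rag(query, retrieve, generate, grade, threshold=0.7, max_iters=3):
    """Retrieve, answer, and grade; retry with more context while confidence is low."""
    context = []
    answer, confidence, passes = "", 0.0, 0
    for passes in range(1, max_iters + 1):
        context.extend(retrieve(query, passes))        # widen the evidence each pass
        answer = generate(query, context)
        confidence = grade(query, context, answer)     # e.g. combined ISREL/ISSUP/ISUSE
        if confidence >= threshold:
            break
    return answer, confidence, passes
```

The loop returns the last answer even when the threshold is never reached, so the caller can decide whether to show it with a low-confidence flag.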

Memory & Learning

Core: Salience = base_similarity × source_authority × recency_decay × access_boost × type_weight. FSRS-inspired power-law decay for decisions. Memory recall fires alongside KB query in auto-inject with 500ms timeout.
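The salience product is simple to express in code. The decay curve below, R = 1 / (1 + t / (9·S)), is one power-law shape in the FSRS style that equals 0.9 when the age matches the stability; the exact curve and parameter names used by the project are not stated, so treat them as assumptions.

```python
def recency_decay(age_days: float, stability_days: float) -> float:
    """Power-law decay: 1.0 at age 0, 0.9 when age equals stability."""
    return 1.0 / (1.0 + age_days / (9.0 * stability_days))


def salience(
    base_similarity: float,
    source_authority: float,
    age_days: float,
    stability_days: float,
    access_boost: float,
    type_weight: float,
) -> float:
    """Multiply the five factors named in the salience formula."""
    return (
        base_similarity
        * source_authority
        * recency_decay(age_days, stability_days)
        * access_boost
        * type_weight
    )


# A 30-day-old decision with 30-day stability keeps 90% of its recency factor.
score = salience(0.8, 1.0, 30.0, 30.0, 1.0, 1.0)
```

Because the factors multiply, any single factor near zero suppresses the memory regardless of the others.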

injectedHistoryRef tracks artifact:chunk pairs per conversation session. Prior-context note tells the LLM what was shown in earlier turns. History resets on conversation change.

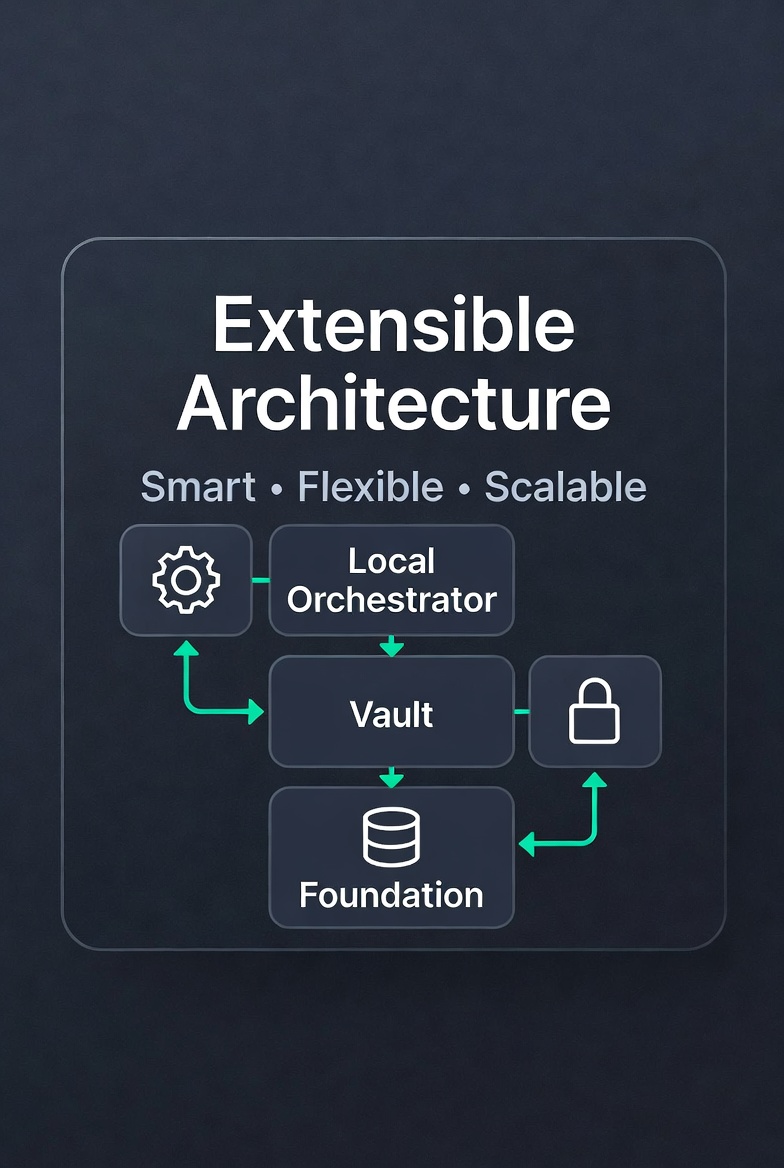

Models & Infrastructure

Core: OpenRouter multi-provider routing. Smart capability-based model scoring with three-way routing (manual/recommend/auto). Proactive model switch on ignorance detection.

Guided install wizard with copy-to-clipboard + auto-detect polling. host.docker.internal fallback for Docker↔native. 6/8 stages local (claim extraction, query decomposition, topic extraction, memory resolution, simple verification, reranking). Per-stage circuit breakers.

3-tier routing: local (Ollama, free) → mid (OpenRouter small models) → frontier (Claude/GPT-4o). Task-capability matrix maps each pipeline stage to a minimum required tier. Tier override available per-query via model_preference flag.
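One way to picture the task-capability matrix is a lookup from pipeline stage to minimum tier. In this sketch the matrix entries are invented for illustration, and the rule that a per-query preference can raise but not lower the tier is an assumption, not documented behavior.

```python
from enum import IntEnum
from typing import Optional


class Tier(IntEnum):
    LOCAL = 0      # Ollama, free
    MID = 1        # OpenRouter small models
    FRONTIER = 2   # Claude / GPT-4o


# Hypothetical matrix: stage name -> minimum tier allowed to run it.
MIN_TIER = {
    "claim_extraction": Tier.LOCAL,
    "reranking": Tier.LOCAL,
    "final_answer": Tier.FRONTIER,
}


def resolve_tier(stage: str, model_preference: Optional[Tier] = None) -> Tier:
    """Pick the tier for a stage, honoring an optional per-query preference."""
    required = MIN_TIER[stage]
    if model_preference is None:
        return required
    # Assumed policy: never drop below the stage's minimum.
    return max(required, model_preference)
```

For example, `resolve_tier("reranking", Tier.FRONTIER)` upgrades a normally local stage for one query, while `resolve_tier("final_answer", Tier.LOCAL)` stays at the frontier tier.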

Circuit breakers on all Bifrost + Neo4j calls. 5-tier graceful degradation (full → lite → direct → cached → offline). Shared httpx connection pool. Distributed request tracing via X-Request-ID.
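For readers unfamiliar with the pattern, a circuit breaker can be sketched in a few lines. The thresholds, method names, and injectable clock here are illustrative assumptions; the project's breakers may track state differently.

```python
import time


class CircuitBreaker:
    """Open after repeated failures, then allow a probe once a cooldown passes."""

    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        # Half-open: let a probe request through after the cooldown.
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()
```

A caller would check `allow()` before each downstream call and fall back to the next degradation tier when it returns False.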

Governance & Compliance

Core: STRICT_AGENTS_ONLY=true wires a FastAPI router-level dependency that returns 403 on every /custom-agents endpoint before the body executes: no Neo4j hit, no agent load, no runtime exposure. Read at request time so operators can flip the switch without restart. Logged at WARNING for incident-response audit. The built-in specialist agents remain available.

MCP_CLIENT_MODE supports three modes: permissive (default — every configured server callable), allowlist (only servers in MCP_CLIENT_ALLOWLIST), disabled (every external MCP call denied — kill switch). Every call emits a structured INFO log with tool + server + status + elapsed time, plus a Sentry breadcrumb. Denials happen before the wire. Per-call read of both env vars; flip without restart.
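The three modes reduce to a small gate evaluated on every call. This sketch assumes MCP_CLIENT_ALLOWLIST is a comma-separated list and that unrecognized mode values are denied; neither detail is stated above, and the function name is hypothetical.

```python
import os


def mcp_call_allowed(server: str, env=os.environ) -> bool:
    """Decide whether an external MCP call may proceed, reading config per call."""
    mode = env.get("MCP_CLIENT_MODE", "permissive").strip().lower()
    if mode == "permissive":
        return True
    if mode == "allowlist":
        raw = env.get("MCP_CLIENT_ALLOWLIST", "")
        allowed = {name.strip() for name in raw.split(",") if name.strip()}
        return server in allowed
    # "disabled" is the kill switch; unknown values are also denied (assumption).
    return False
```

Because the environment mapping is consulted inside the function rather than cached at import time, changing either variable takes effect on the next call.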

Sentry breadcrumbs on every external-MCP call, swallowed_error counters via core.utils.swallowed (Redis sorted-set tracking surfaced at /health.swallowed_errors_last_hour), stage breadcrumbs on every internal LLM call (contract-tested), request_id contextvar propagation across async boundaries, tenant_id contextvar for multi-tenant isolation.

ENABLE_SENTRY env var defaults off — Sentry SDK initialization is conditional, no DSN required for normal operation. ChromaDB anonymized_telemetry=false in compose. Optional Fernet encryption-at-rest via ENABLE_ENCRYPTION. Setup-wizard env writes routed to a host bind-mounted .env so credentials persist across container rebuild without leaving the host.

Import & Management

Core: Preview with estimation (chunks, storage). Archive extraction (zip/tar.gz). Junk filtering (DS_Store, temp files, Office locks, macOS resource forks). SSE progress streaming. Pause/resume/cancel. Batch limit 100.
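A junk filter of this kind usually comes down to a few filename checks. The patterns below follow common conventions (".DS_Store", "._" AppleDouble files, "~$" Office lock files, a "__MACOSX" archive folder, ".tmp" suffixes); the exact rule set used by the importer is an assumption.

```python
from pathlib import PurePosixPath


def is_junk(path: str) -> bool:
    """Return True for archive members that should be skipped on import."""
    parts = PurePosixPath(path).parts
    name = parts[-1] if parts else ""
    if "__MACOSX" in parts:          # folder macOS adds to zip archives
        return True
    if name == ".DS_Store":          # Finder metadata
        return True
    if name.startswith("._"):        # macOS resource fork (AppleDouble)
        return True
    if name.startswith("~$"):        # Office lock file
        return True
    if name.endswith((".tmp", ".temp", "~")):  # editor and temp files
        return True
    return False


members = ["docs/report.docx", "docs/~$report.docx", "docs/.DS_Store"]
kept = [m for m in members if not is_junk(m)]
```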

pdfplumber with table extraction + Markdown serialization. Parsers: PDF, DOCX, XLSX, CSV, TXT, MD, EML, MBOX, EPUB, RTF. OCR/audio/vision via Pro plugins. Per-chunk retrieval profiles computed at ingest time.

KB_NAMESPACE env var. collection_name(domain, namespace) with backward-compatible legacy format. BM25 namespaced directory layout. ChromaDB batch writes (5000 max). BM25 LRU eviction at 8 domains.
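The eviction policy for loaded BM25 indexes can be sketched with an OrderedDict. The class name and loader callback are hypothetical; only the limit of 8 domains comes from the text.

```python
from collections import OrderedDict


class IndexCache:
    """Keep at most max_domains indexes in memory, evicting the least recently used."""

    def __init__(self, loader, max_domains=8):
        self._loader = loader          # callable: domain -> index object
        self._max = max_domains
        self._cache = OrderedDict()

    def get(self, domain):
        if domain in self._cache:
            self._cache.move_to_end(domain)   # mark as most recently used
            return self._cache[domain]
        index = self._loader(domain)
        self._cache[domain] = index
        if len(self._cache) > self._max:
            self._cache.popitem(last=False)   # drop the least recently used
        return index

    def domains(self):
        """Cached domains, ordered from least to most recently used."""
        return list(self._cache)
```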

Automation

Pro: DAG execution engine with SVG canvas. Kahn's algorithm for cycle detection. 4 built-in templates (research, summarization, Q&A, custom). Nodes: KB query, LLM call, conditional branch, memory read/write. Exportable as JSON workflow definitions.
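Kahn's algorithm yields an execution order and detects cycles in the same pass: if some nodes never reach in-degree zero, the graph has a cycle. The node names and the edge-list input format below are illustrative, not the engine's actual workflow schema.

```python
from collections import deque


def topo_order(nodes, edges):
    """Return a valid execution order, or raise ValueError if the graph has a cycle."""
    indegree = {n: 0 for n in nodes}
    adjacent = {n: [] for n in nodes}
    for src, dst in edges:
        adjacent[src].append(dst)
        indegree[dst] += 1

    queue = deque(n for n in nodes if indegree[n] == 0)
    order = []
    while queue:
        node = queue.popleft()
        order.append(node)
        for nxt in adjacent[node]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:
                queue.append(nxt)

    if len(order) != len(nodes):
        raise ValueError("workflow contains a cycle")
    return order


order = topo_order(
    ["kb_query", "llm_call", "memory_write"],
    [("kb_query", "llm_call"), ("llm_call", "memory_write")],
)
```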

9 AI Agents

Specialized intelligence for every task.

QueryAgent

Orchestrates multi-domain KB search with hybrid retrieval, query decomposition, context assembly, and reranking.

See it in action

Clone the repo and run one command. 9 specialist agents + custom-agents builder, 21 core tools + any external MCP server, 33 API routers — all yours.